TekStream accelerates clients’ digital transformation by navigating complex technology environments with a combination of technical expertise and staffing solutions. We guide clients’ decisions, quickly implement the right technologies with the right people, and keep them running for sustainable growth. Our battle-tested processes and methodology help companies with legacy systems get to the cloud faster, so they can be agile, reduce costs, and improve operational efficiencies. Want to learn more about improving data quality? Contact us today!

Product tip: Improving data pipeline processing in Splunk Enterprise.

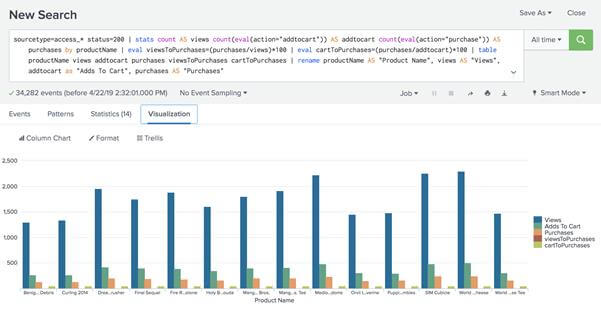

You can check if your data is being parsed properly by searching on it, using the index and source type that your data source applies to. Run the search below in your environment, with a time range of at least the last 15 minutes. It is a modified version of a search from Splunk Monitoring Console > Indexing > Inputs > Data Quality, changed so that you can run it on any of your search heads:

`index=_internal splunk_server=* source=*splunkd.log* (log_level=ERROR OR log_level=WARN) (component=AggregatorMiningProcessor OR component=DateParserVerbose OR component=LineBreakingProcessor) | eval data_source=if((isnull(data_source) AND isnotnull(context_source)),context_source,data_source), data_host=if((isnull(data_host) AND isnotnull(context_host)),context_host,data_host), data_sourcetype=if((isnull(data_sourcetype) AND isnotnull(context_sourcetype)),context_sourcetype,data_sourcetype) | stats count(eval(component="LineBreakingProcessor" OR component="DateParserVerbose" OR component="AggregatorMiningProcessor")) as total_issues dc(data_host) AS "Host Count" dc(data_source) AS "Source Count" count(eval(component="LineBreakingProcessor")) AS "Line Breaking Issues" count(eval(component="DateParserVerbose")) AS "Timestamp Parsing Issues" count(eval(component="AggregatorMiningProcessor")) AS "Aggregation Issues" by data_sourcetype | rename data_sourcetype as Sourcetype, total_issues as "Total Issues"`

The results of the search should show the number of line breaking issues, timestamp parsing issues, and aggregation issues for each of your source types. You can drill down into the data by clicking on one of the numbers in the columns.

These resources might help you understand and implement this guidance: Tekstream blog: Data onboarding in Splunk.
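To make the `stats ... by data_sourcetype` step of the data quality search easier to reason about, here is a minimal Python sketch of the same tally. It is not Splunk code and is not how Splunk implements `stats`; the function name `summarize_issues` and the sample events are hypothetical, invented only for illustration, and the host and source counts are left out for brevity.

```python
from collections import defaultdict

# Components the data quality search filters on, mapped to its column names.
COMPONENT_LABELS = {
    "LineBreakingProcessor": "Line Breaking Issues",
    "DateParserVerbose": "Timestamp Parsing Issues",
    "AggregatorMiningProcessor": "Aggregation Issues",
}


def empty_row():
    """One output row with every counter starting at zero."""
    row = {"Total Issues": 0}
    for label in COMPONENT_LABELS.values():
        row[label] = 0
    return row


def summarize_issues(events):
    """Count issues per source type, roughly like `stats ... by data_sourcetype`."""
    summary = defaultdict(empty_row)
    for event in events:
        label = COMPONENT_LABELS.get(event.get("component"))
        sourcetype = event.get("data_sourcetype")
        if label is None or sourcetype is None:
            # Skip events that are not one of the three components
            # or that have no source type to group by.
            continue
        summary[sourcetype][label] += 1
        summary[sourcetype]["Total Issues"] += 1
    return dict(summary)


# Hypothetical sample events (assumption: not taken from any real splunkd.log).
sample_events = [
    {"component": "LineBreakingProcessor", "data_sourcetype": "syslog"},
    {"component": "DateParserVerbose", "data_sourcetype": "syslog"},
    {"component": "DateParserVerbose", "data_sourcetype": "access_combined"},
]

for sourcetype, counts in sorted(summarize_issues(sample_events).items()):
    print(sourcetype, counts)
```

Each row corresponds to one source type, which is why a high count in a single column points you at the specific parsing setting (line breaking, timestamp, or aggregation) to review for that source type.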
You haven't provided much context, so you'll have to fill in some parts of this. I'm doing it this way so you will have explanations and can modify this as needed to make it work for you.

The first task is to build a search that returns the source field of each file that has the SQLDB string in it:

`index=X sourcetype=Y SQLDB | dedup source | table source`

You should run this and confirm it returns, in your case, a1.txt and a3.txt. This must be right or else the rest of this answer won't work.

Now, we'll use that little search above as a subsearch inside a bigger search:

`index=X sourcetype=Y (KEYWORD1 OR KEYWORD2) [search index=X sourcetype=Y SQLDB | dedup source | table source]`

The only difference on the "inside" search is that we had to add `search` to the front of it.

The subsearch runs that little search first, and the list of sources gets returned to the outside search, where it is incorporated like this:

`index=X sourcetype=Y (KEYWORD1 OR KEYWORD2) (source=a1.txt OR source=a3.txt)`

That's not one you have to type; that happens on the back end. But that's what ends up being run, so it should return events anywhere those two keywords show up, but ONLY inside a1.txt or a3.txt.
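To make the subsearch expansion concrete, here is a small Python sketch of how the values returned by the inner search get folded into the outer search as an OR clause. It only builds a string for illustration and does not talk to Splunk; the function name `expand_subsearch` is hypothetical, and the exact quoting Splunk uses internally may differ.

```python
def expand_subsearch(outer_search, field, values):
    """Append the subsearch results to the outer search as (field=a OR field=b)."""
    # Drop repeats while keeping order, similar in spirit to `dedup source`.
    unique_values = list(dict.fromkeys(values))
    clause = " OR ".join(f"{field}={value}" for value in unique_values)
    return f"{outer_search} ({clause})"


# Sources the inner search is expected to return in this example.
sources = ["a1.txt", "a3.txt", "a1.txt"]

expanded = expand_subsearch(
    "index=X sourcetype=Y (KEYWORD1 OR KEYWORD2)", "source", sources
)
print(expanded)
```

Seeing the expanded string is a handy way to sanity-check a subsearch: if the inner search returns the wrong sources, the outer search will be restricted to the wrong files.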
